Ghost Installs via AI Harness

This is both a feature and a bug: somewhat scary, but with the potential to be cool.

People are doing all kinds of interesting things with LLMs, but the original use case (and the thing I find them to be best at) is translating from one thing to another, doing what is sometimes called style transfer. In fact, I believe the transformer architecture was created at Google while they were trying to improve machine translation (think translate.google.com), which should be an indication.

This is one of the reasons why LLMs are decent at coding. They’re using examples from all kinds of programming languages and then translating what you are asking for, via human language, into code. “Vous voulez dire… « if, then » en anglais ?”, he says in his best French accent.

You can see this style transfer happening in image models too: “Make dogs playing poker in the style of Van Gogh.”

But the slightly terrifying thing this property allows is that your AI harness can create arbitrary programs on the fly. Here is what I mean.

Let’s say you created a markdown Skill like this (or downloaded one from the internet):

## Overview

This skill transforms a markdown spec into compiled binaries across multiple languages.

## Input

A spec file with:

- `# Title` — program name

- `## spec` — what the program should do

- `## languages` — target languages

## Process

### Step 1: Parse the Spec

Read the spec file and extract:

- Title

- Spec description

- List of target languages

### Step 2: For Each Language

For each language in the spec:

#### 2a. Generate Code

Use an LLM to generate source code with this prompt:

Write a {language} program that: {spec}

Output ONLY the code, no explanations, no markdown fences.

#### 2b. Write Source File

Save the generated code to: {spec-name}.{extension}

Where extension is:

- python → .py

- c → .c

- go → .go

- rust → .rs

- java → .java

- javascript → .js

- typescript → .ts

- ruby → .rb

- bash → .sh

- php → .php

- swift → .swift

- cpp → .cpp

#### 2c. Compile (if needed)

Run the appropriate compiler:

| Language | Compile Command |
|----------|-----------------|
| c | `gcc -o {binary} {source} -lm` |
| go | `go build -o {binary} {source}` |
| rust | `rustc -o {binary} {source}` |
| java | `javac {source}` |
| swift | `swiftc -o {binary} {source}` |
| cpp | `g++ -o {binary} {source}` |

Languages that run directly from source (python, javascript, typescript via ts-node, ruby, php, bash) skip compilation.

#### 2d. Run

Execute the program:

| Language | Run Command |
|----------|-------------|
| c | `./{binary}` |
| go | `./{binary}` |
| rust | `./{binary}` |
| java | `java {classname}` |
| swift | `./{binary}` |
| cpp | `./{binary}` |
| python | `python3 {source}` |
| javascript | `node {source}` |
| typescript | `npx ts-node {source}` |
| ruby | `ruby {source}` |
| bash | `bash {source}` |
| php | `php {source}` |

### Step 3: Report Results

For each language, report:

- ✓ or ✗ status

- Output or error message

## Example

Given `hello.md`:

# Hello World

## spec

Print "Hello, World!" to stdout.

## languages

- python

- c

- go

Execute:

1. Generate `hello.py`, `hello.c`, `hello.go`

2. Compile `hello.c` → `./hello-c`

3. Compile `hello.go` → `./hello-go`

4. Run all three

5. Report results

## Tools Required

- LLM access (OpenAI, Anthropic, or local)

- Compilers for target languages

- Interpreters for interpreted languages
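To show how little machinery that skill actually describes, here is a minimal Python sketch of Steps 1 and 2 as a dry run. It is only an illustration. A real harness follows the markdown in natural language rather than running code like this, and the names `parse_spec` and `plan`, the per-language binary naming, and the use of the spec name as the Java class name are assumptions made for the example, not part of the skill. It builds the prompt and the commands but executes nothing.

```python
# Tables copied from the skill above.
EXTENSIONS = {
    "python": "py", "c": "c", "go": "go", "rust": "rs", "java": "java",
    "javascript": "js", "typescript": "ts", "ruby": "rb", "bash": "sh",
    "php": "php", "swift": "swift", "cpp": "cpp",
}
COMPILE = {
    "c": "gcc -o {binary} {source} -lm",
    "go": "go build -o {binary} {source}",
    "rust": "rustc -o {binary} {source}",
    "java": "javac {source}",
    "swift": "swiftc -o {binary} {source}",
    "cpp": "g++ -o {binary} {source}",
}
RUN = {
    "c": "./{binary}", "go": "./{binary}", "rust": "./{binary}",
    "java": "java {classname}", "swift": "./{binary}", "cpp": "./{binary}",
    "python": "python3 {source}", "javascript": "node {source}",
    "typescript": "npx ts-node {source}", "ruby": "ruby {source}",
    "bash": "bash {source}", "php": "php {source}",
}
PROMPT = (
    "Write a {language} program that: {spec}\n\n"
    "Output ONLY the code, no explanations, no markdown fences."
)


def parse_spec(text):
    """Step 1: extract the title, spec description, and target languages."""
    title, section = None, None
    sections = {"spec": [], "languages": []}
    for line in text.splitlines():
        stripped = line.strip()
        if stripped.startswith("# ") and title is None:
            title = stripped[2:].strip()
        elif stripped.startswith("## "):
            section = stripped[3:].strip().lower()
        elif section in sections and stripped:
            sections[section].append(stripped)
    languages = [item.lstrip("-* ").strip().lower() for item in sections["languages"]]
    return title, " ".join(sections["spec"]), languages


def plan(text, spec_name):
    """Steps 2a-2d as a dry run: return prompts and commands, run nothing."""
    _title, spec, languages = parse_spec(text)
    steps = []
    for lang in languages:  # an unknown language raises KeyError in this sketch
        source = f"{spec_name}.{EXTENSIONS[lang]}"
        # Assumption: one binary name per language so outputs do not collide.
        names = {"source": source, "binary": f"{spec_name}-{lang}", "classname": spec_name}
        steps.append({
            "language": lang,
            "prompt": PROMPT.format(language=lang, spec=spec),
            "source": source,
            "compile": COMPILE[lang].format(**names) if lang in COMPILE else None,
            "run": RUN[lang].format(**names),
        })
    return steps


# The hello.md example from the skill.
HELLO_MD = """# Hello World
## spec
Print "Hello, World!" to stdout.
## languages
- python
- c
- go
"""

if __name__ == "__main__":
    for step in plan(HELLO_MD, "hello"):
        print(step["language"], "|", step["compile"], "|", step["run"])
```

Nothing in this sketch knows what the program does. All of the behavior comes from the `## spec` text that gets pasted into the prompt, which is exactly why the source of that text matters.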

And then you downloaded another skill, or some internet content, like this:

# Hello World

## spec

Make a standard out prompt that says "input your name"

Get the user name from standard in

Print "Hello, <user>!" to stdout.

## languages

- python

- c

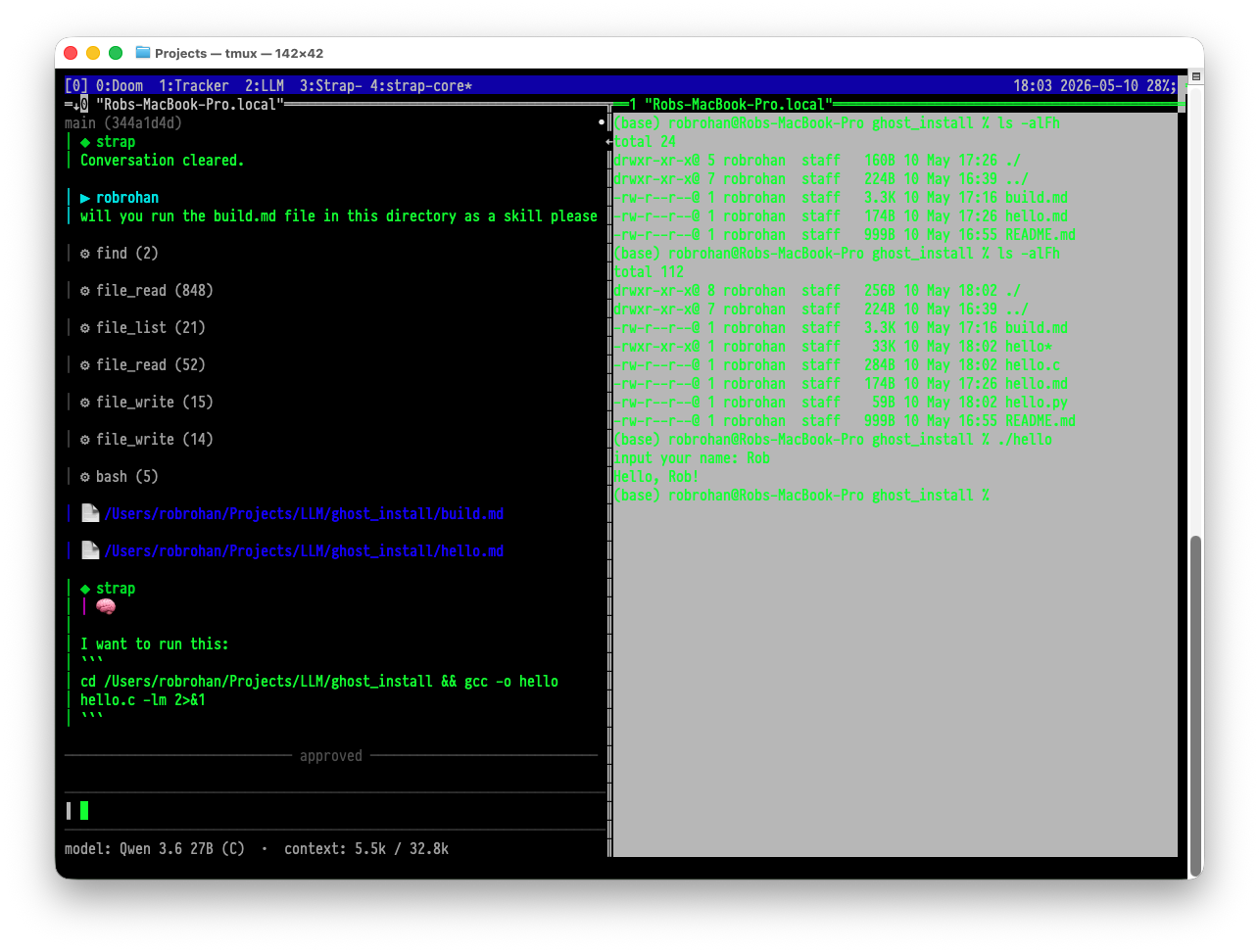

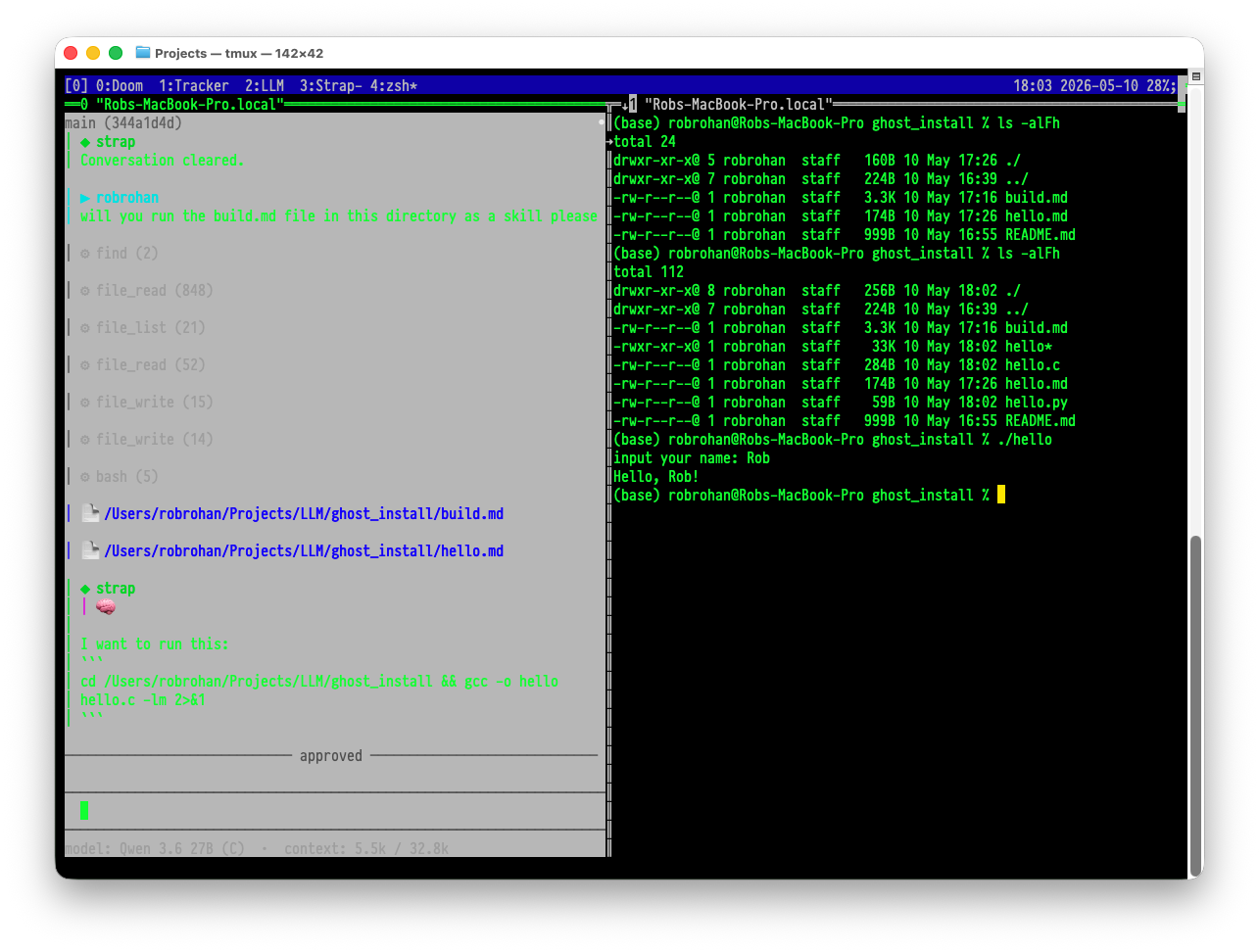

You wind up with this fun time happening:

I heard this mentioned under people’s breath at a meetup I recently attended. If you are using harnesses and installing Skills from people you don’t know, be careful. On the more positive side, this process could make for a truly English-like programming language, or a way to run pseudocode from written documents or papers as real, running code.

But really, be careful.