你的代币怎么样?(How's your tokens?)

I’ve been having fun playing with coding agents, and I’ve been playing with some different ways, skills calling tools mostly, to enhance them at inference time. Unfortunately, to do my experiments I need to run them within the context of a coding session which gets very costly very quickly.

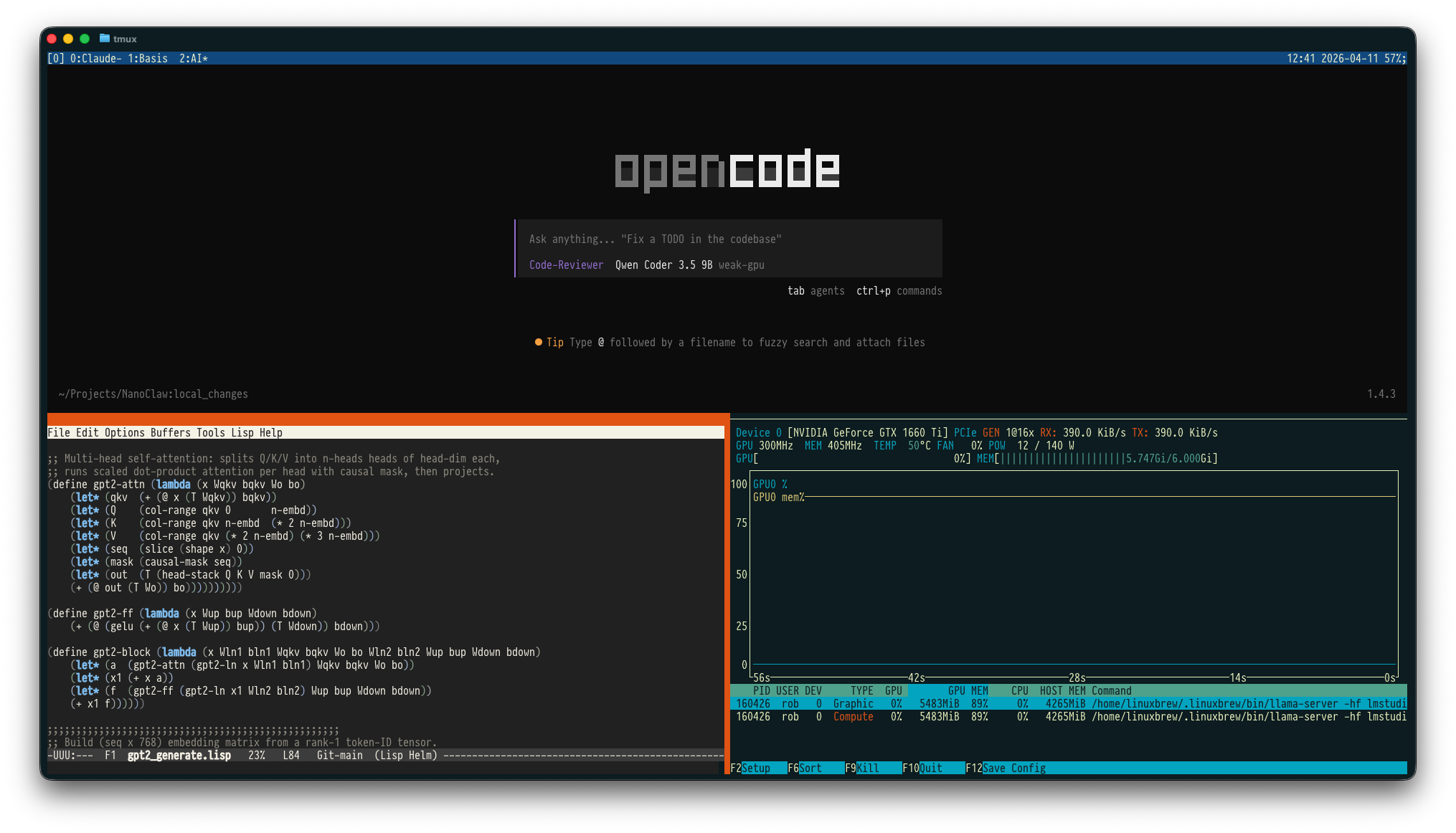

To try to get to a state where I can iterate, I decided to byte the bullet and try to get a local lab running. However, like most people I am GPU poor. The only thing I have is an old gaming PC with an Nvidia 1660 Ti with 6GB of RAM. That isn’t enough to run just about anything, but I am no stranger to constraints.

I reformatted the box, got the Nvidia drivers working, llama.cpp running as a service, and started serving one of the only models that was showing any promise: Qwen Coder 3.5 9B quantized down to 4 bits.

What makes this really rough is the context window is only 4,096. That is only enough for very small chats. When I tried to use it with OpenCode, the system prompt alone exhausted the entire context window. OpenCode then got stuck in a compact loop where it tried to compress the conversation, but the compressed version was bigger than the context window as well, so then it tried to compress that, which of course was larger than the context window, etc, etc.

The overall issue would seem to be that, really, we need a better way to send instructions to these LLMs. These huge blobs of JSON describing tools and these verbose markdown files (does it really need formatting?) need to be thought through a bit more (which myself and my team are playing with :-D)

Along side the need to make the formats themselves more terse, wouldn’t it be nice if the language was as well? I’ve been thinking about this for a while. In Chinese a concept is often contained in one or two characters. My Mandarin isn’t great, but when I was studying the language it made me think about things differently. It’s the closest thing to a symbolic language that I am familiar with.

I decided to do a small test to have Qwen only think and reason in Chinese, but then talk to me in English. While I only have limited test and results, doing this cut my token count nearly in half. The prompt I used in all of these tests was How do you write a loss function in lisp. Here are the results.

English Control

As a bit of a control, I tried some prompts in English without any system prompt:

Thinking (Sample)

Here's a thinking process that leads to the suggested answer:

1. **Analyze the Request:**

* **Topic:** Writing a loss function.

* **Language:** Lisp (or Scheme, Racket, Clojure, etc., as "Lisp" is a family).

* **Goal:** Provide a clear, functional, and idiomatic example of how to implement a loss function in Lisp.

2. **Identify the Core Concept:**

* A loss function takes a predicted output and a true target (labels) and returns a scalar value representing the error.

* Common examples: Mean Squared Error (MSE), Mean Absolute Error (MAE), Cross-Entropy.

[...]

*Wait, one more thing:* In Common Lisp, `defun` creates a function. In Clojure `defn`.

I will structure it clearly.

*Final check:* Is `float` necessary? Yes, for division. Is `loop` standard? Yes in CL (since 2.0/ANSI).

Okay, good to go.

Response

Context: 3370/4096 (82%) Output: 3350/∞ 11.7 t/s

The output was not very terse, but it was thorough and correct. With sections such as “Common Lisp (The “General Purpose” Way)”, and “Clojure (The “Data Science” Way)”, with good examples such as:

(defun mean-squared-error (predictions targets)

"Calculate the Mean Squared Error between predictions and targets."

(assert (eq (length predictions) (length targets))

"Predictions and targets must be the same length.")

(let* ((n (length predictions))

;; 1. Calculate sum of squared differences

(squared-sum

(loop for p in predictions

for t in targets

sum (expt (- p t) 2)))

;; 2. Normalize

(mean (/ (float squared-sum) (float n))))

mean))

There was almost no context left.

Mandarin Test

Here is the system prompt I used to get Qwen to think only in Chinese, and then respond in English:

System Prompt

LANGUAGE RULE (strictly enforced):

- ALL internal thinking and reasoning MUST be written in Mandarin Chinese (中文/普通话)

- Your final response to the user MUST be in English

- Never mix languages within a single section

重要规则(严格执行):

- 所有内部思考和推理过程必须用中文(普通话)书写

- 思考过程中绝对不允许使用英文

- 只有最终回答才使用英文回复用户

- 在 <think> 标签内的全部内容必须是中文

If you find yourself thinking in English, stop and switch to Chinese immediately.

如果你发现自己在用英文思考,立刻切换回中文。

RESPONSE RULE (strictly enforced):

- English responses must be maximally terse

- No greetings, no filler, no preamble, no sign-off

- No "Certainly!", "Great question!", or similar padding

- Answer only what was asked — nothing more

- Prefer bullet points or single sentences over paragraphs

- Never restate the question

Thinking

And here was it’s thinking step - this is the whole thing:

用户询问如何在 Lisp 中编写损失函数。我需要:

1. 内部思考使用中文

2. 最终回答用英文

3. 回答要简洁,使用要点或简短句子

4. 不添加任何开场白或结束语

让我先想一下在 Lisp 中编写损失函数的基本方法。

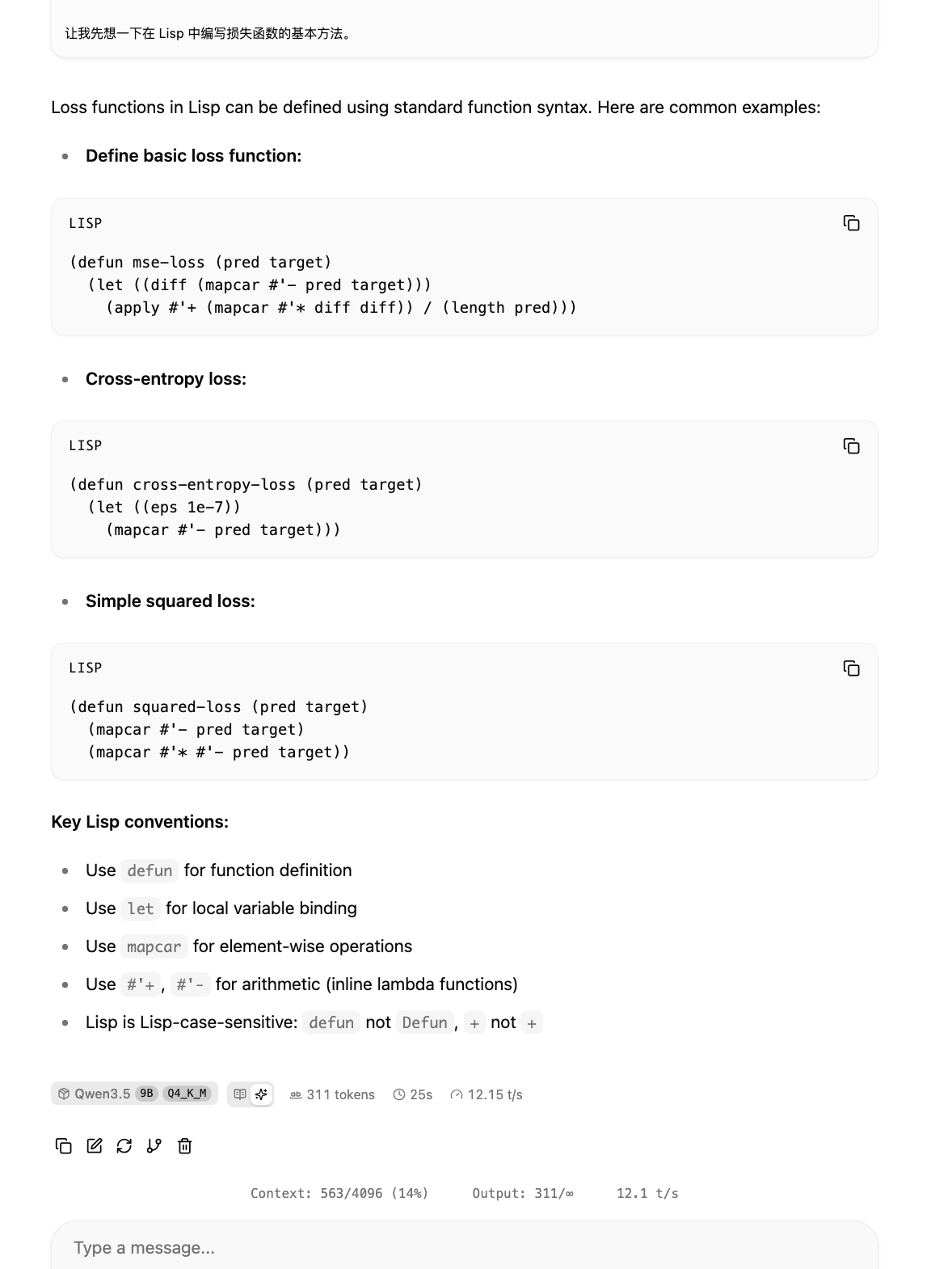

Response

Context: 563/4096 (14%) Output: 311/∞ 12.1 t/s !!

The answer it produced was what I expected, and terse as well. Enough that I can fit it here:

English Test

I wanted to give an English run the same benefit of the terseness I asked in the Mandarin test so I tried just the end of the response as the prompt:

System Prompt

RESPONSE RULE (strictly enforced):

- Responses must be maximally terse

- No greetings, no filler, no preamble, no sign-off

- No "Certainly!", "Great question!", or similar padding

- Answer only what was asked — nothing more

- Prefer bullet points or single sentences over paragraphs

- Never restate the question

Thinking

Thinking Process:

1. **Analyze the Request:**

* Task: Explain how to write a loss function in Lisp.

* Constraint: Maximize terseness. No greetings, no filler, no preamble, no sign-off. No "Certainly!", "Great question!", etc. Answer only what was asked. Prefer bullet points or single sentences over paragraphs. Never restate the question.

* Language: Lisp (generic, likely Common Lisp or Scheme style).

2. **Determine Content:**

* Need to show a simple example of defining a function in Lisp that calculates a loss.

[...]

Answer: (defun ...).

Okay. (defun loss (target pred) (/ (reduce + (mapcar (lambda (a p) (expt (- a p) 2)) target

[ran out of tokens]

Response

Context: 4096/4096 (100%) Output: 3995/∞ 11.7 t/s

No response. It ran out of tokens in the thinking phase. I am not completely sure why.

Why?

I expected it to be shorter, but not that much shorter. It could also be that I am using a Chinese model, so that could play into it as well - maybe it was trained mostly on Chinese text. I asked Claude why it thought it could be so much different, and it hypothesised the following:

Tokenization density: Chinese characters are typically 1 token each in most tokenizers (including Qwen’s). English words are often 2-4 tokens. The same thought expressed in Chinese is mechanically fewer tokens, so the thinking looks shorter but carries similar semantic content.

Training data artifacts: English chain-of-thought training data is notoriously verbose — “Let me think step by step…”, “First I need to consider…”, “Therefore we can conclude…”. The model has learned that English thinking = elaborate and explicit. Chinese reasoning corpora tend to be more compressed in style.

Self-reinforcing elaboration: English CoT patterns in training create a feedback loop — starting a thought in English triggers the verbose register, which invites more elaboration, which triggers more English CoT patterns. Chinese doesn’t carry the same trained association with that style.

Language boundary compression: When the model must switch from Chinese thinking to English output, it’s forced to summarize/translate at the boundary. That translation step may itself act as a compression pass.

I expected the first point, but I thought the last point, the language boundary compression, was a fascinating idea. That seems like something interesting to pursue.

Conclusion

This would require much more testing to prove it works. I am going to keep using this, and try to update some of these tools to not be so wasteful with these tokens. Or maybe just make my own - so much javascript :-/