Basis - A Lisp Neural Network

Claude Code is absolutely insane. For a builder-of-software-and-crazy-ideas, I am unsure of another way to express how incredibly addictive this tool is.

However, it could very well be that I have fallen down one of the oft-described rabbit holes. Or maybe I have succumbed to AI psychosis. At this point I am not sure. Like the man who thought he discovered a new form of mathematics because ChatGPT convinced him, perhaps I am falling into that trap as well. Somebody please let me know if I’ve gone off the rails.

World Model

The current crop of “AIs” do not have a world model. I often describe this to people by saying: let’s say you’ve read every book about Ireland. You know every small detail. You know where every town and farm is located, you know the language, you know every bit of history. You still have never been there. You still have no idea what a breeze feels like in the evening while sitting by the River Liffey in Dublin. You don’t know what it feels like to have a Guinness at the brewery.

Another example is that AIs can’t draw a glass of wine filled to the brim because they don’t really understand what a glass is, what wine is, or what full actually means.

Trying to solve this problem is often called having the AI build a world model.

Symbols and Physics

I’ve been toying with this idea for a while now. It seems like you could get pretty far using a mix of symbolic relationships and a physics engine alongside the current crop of LLMs: use the current models for text input and the nondeterministic, cosine-similarity type things, and “something else” for symbolic and physical world understanding. Something by which a reasoning model could update and shape its understanding at inference time.

The idea I’ve been playing with is around trying to make progress on solving that problem. The idea, however, is one of those (said in a Californian surfer tone of voice) “whoa, man wouldn’t it be cool if you mixed X and Y. That would be, like, so cool”. I have this vague idea of how it would work, but to be honest, I am just not smart enough to pull it off. Not by myself anyway.

However, it seems if you smash Claude Code on my face I can come close to building a prototype of it… well, not me… we… or, really, mostly it.

And then here’s the problem - I am pushing to the edge of my understanding, and I know LLMs are sycophantic. I have to couch any perceived progress with, “is this working, or are you convincing me to shave my head and go live in the desert and worship rocks”?

With the AI tools, you can move so fast, and do so many off the wall things, it is genuinely hard to know if you’re just making a bunch of nonsense or actually doing something of interest. Here is what I’ve been doing.

A Neural Network in Lisp

The tip of the idea is to build neural networks in Lisp. Lisp is an old programming language that dates back to the late 1950s and was all the rage in AI research for decades afterward. They even made Lisp Machines - what I would consider similar to GPUs or TPUs of today.

Lisp is a difficult language, but it has this wonderful property called homoiconicity. A little AI summary on homoiconicity:

Homoiconicity is a property of some programming languages where the program’s structure is similar to its syntax, allowing code to be manipulated as data. This means that the internal representation of a program can be inferred just by reading the program itself, making metaprogramming easier.

Essentially, in Lisp, code and data are the same thing. This allows things like programmatic modification of data at runtime. The code itself is the execution tree, and also the program data. Remove all the abstractions. For example, you could define a projection matrix like this:

    (define make-perspective (lambda (fov aspect near far)
      (let* (f (/ 1 (tan (/ fov 2))))
        [[(/ f aspect) 0 0 0]
         [0 f 0 0]
         [0 0 (/ (+ far near) (- near far)) (/ (* 2 (* far near)) (- near far))]
         [0 0 -1 0]])))

That’s a bit hard to read to the untrained eye, but the thing to note is that the matrix definition itself is built from what are called s-expressions, and its variables can be modified at any point in time. Additionally, to save the data, well, that is the saved format. Both the execution and data structure are the same and fully modifiable at runtime. You could redefine near and far to anything you wanted - even a lambda - for example.
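To make the "code is data" point concrete outside of Lisp, here is a minimal Python sketch. It is not Basis, just a stand-in. It stores the first matrix entry, (/ f aspect), as a nested list, evaluates it, then rewrites the tree at runtime and evaluates it again. The names `evaluate`, `OPS`, and `env` are hypothetical and invented for this illustration.

```python
import math
import operator

# Hypothetical operator table for a tiny prefix-expression evaluator.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul,
       "/": operator.truediv, "tan": math.tan}

def evaluate(expr, env):
    """Evaluate a nested-list expression such as ["/", "f", "aspect"]."""
    if isinstance(expr, (int, float)):
        return expr                   # a literal number
    if isinstance(expr, str):
        return env[expr]              # a variable lookup
    op, *args = expr                  # a list: operator followed by arguments
    return OPS[op](*(evaluate(a, env) for a in args))

env = {"f": 2.0, "aspect": 4.0}
cell = ["/", "f", "aspect"]           # the "code" is an ordinary list
before = evaluate(cell, env)          # 2.0 / 4.0

cell[2] = ["*", "aspect", 2]          # edit the expression tree at runtime
after = evaluate(cell, env)           # 2.0 / (4.0 * 2)

print(before, after)                  # 0.5 0.25
```

In Lisp none of this scaffolding is needed, because the list you edit is already the program. That is the property the rest of this post leans on.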

Currently, after you train a neural network, everything is frozen in time. The network cannot learn on the fly, so to speak. There are several reasons for this, but I was curious if I could play around with modifying things at runtime (somehow).

And, maybe even more importantly, you can add (print x) calls anywhere you want, to anything you want, which gives you the ability to inspect values as they are processed through a network at inference time. This means it might be possible to trace paths in networks a bit easier. Everything, from the weights to the activation functions, to the attention heads, to the biases, is live and modifiable.
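As a rough illustration of that kind of inspection, here is a small pure-Python sketch, again a stand-in rather than Basis code. A two-layer network calls an optional `trace` callback after every step, and one weight is then changed in place before a second run. The layer layout, the `trace` hook, and all of the numbers are assumptions made up for this example.

```python
def dense(weights, biases, inputs):
    """One fully connected layer; weights is a list of rows."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    return [max(0.0, v) for v in values]

def forward(layers, inputs, trace=None):
    """Run inputs through the layers, calling trace(name, values) after each step."""
    values = inputs
    for i, layer in enumerate(layers):
        values = dense(layer["w"], layer["b"], values)
        if trace:
            trace(f"layer{i}.linear", values)
        values = layer["act"](values)
        if trace:
            trace(f"layer{i}.act", values)
    return values

# Made-up weights, chosen only so the arithmetic is easy to follow.
layers = [
    {"w": [[1.0, -1.0], [0.5, 0.5]], "b": [0.0, -1.0], "act": relu},
    {"w": [[2.0, 1.0]], "b": [0.5], "act": relu},
]

log = []
out = forward(layers, [1.0, 2.0], trace=lambda name, v: log.append((name, v)))
for name, values in log:
    print(name, values)

# Weights stay live: change one and run again without rebuilding anything.
layers[1]["w"][0][1] = 3.0
out_after = forward(layers, [1.0, 2.0])
print(out, out_after)                 # [1.0] [2.0]
```

The difference in a Lisp is that you would not need to plan the hook in advance. You could drop a print into any expression after the fact.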

On top of all of that, it is incredibly easy to build a knowledge graph at runtime:

    (define kb '((dog is-a animal)
                 (cat is-a animal)
                 (animal is-a living-thing)
                 (dog has fur)
                 (dog has legs)
                 (car mass 1500)
                 (dog mass 30)))

    ; what does dog have?
    (print (kb-get 'dog 'has kb))
    ; => (fur legs)

And, again, this structure is both the program and the data. This could allow for some deterministic data to be attached to tokens, or to verify some logical structures, or be used to load symbolic concepts into a physics engine for a reasoning model to run its own experiments.
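For readers who do not read Lisp, the lookup above can be sketched in a few lines of Python. This is a guess at the behavior of kb-get based only on the example output, not the actual Basis implementation. The `is_a` helper is a hypothetical extra, added to show the kind of deterministic check a triple store makes easy.

```python
# The same facts as (subject, relation, object) triples.
KB = [
    ("dog", "is-a", "animal"),
    ("cat", "is-a", "animal"),
    ("animal", "is-a", "living-thing"),
    ("dog", "has", "fur"),
    ("dog", "has", "legs"),
    ("car", "mass", 1500),
    ("dog", "mass", 30),
]

def kb_get(subject, relation, kb):
    """Return every object stored for (subject, relation), in insertion order."""
    return [o for s, r, o in kb if s == subject and r == relation]

def is_a(subject, category, kb):
    """Follow is-a links transitively (hypothetical helper, not from the post)."""
    seen = set()
    frontier = [subject]
    while frontier:
        current = frontier.pop()
        if current == category:
            return True
        if current in seen:
            continue                  # guard against cycles in the facts
        seen.add(current)
        frontier.extend(kb_get(current, "is-a", kb))
    return False

print(kb_get("dog", "has", KB))           # ['fur', 'legs']
print(is_a("dog", "living-thing", KB))    # True
print(is_a("car", "animal", KB))          # False
```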

The final end result I’d like to test is this: since data and code are the same in this paradigm, could a neural network + bolted-on extras improve itself, and then write out its own code generationally? For example, if you loaded everything, weights and all, into a Lisp file, then ran the code and let it improve itself, simply saving the newly modified code would essentially be like creating a new generation.
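The save-a-generation loop can be mocked up in Python to show the shape of the idea. This is only a sketch under heavy assumptions: the "program" is a nested list with its weights inline, the "improvement" is a placeholder that nudges each weight by a fixed step (no learning happens), and `to_sexpr`, `parse_sexpr`, and the file name `gen1.lisp` are all hypothetical.

```python
import os
import tempfile

def to_sexpr(node):
    """Render a nested Python list as s-expression text."""
    if isinstance(node, list):
        return "(" + " ".join(to_sexpr(n) for n in node) + ")"
    return str(node)

def parse_sexpr(text):
    """Parse text produced by to_sexpr back into nested lists."""
    tokens = text.replace("(", " ( ").replace(")", " ) ").split()
    pos = 0

    def read():
        nonlocal pos
        token = tokens[pos]
        pos += 1
        if token == "(":
            items = []
            while tokens[pos] != ")":
                items.append(read())
            pos += 1                  # skip the closing ")"
            return items
        try:
            return float(token)       # a number
        except ValueError:
            return token              # a symbol

    return read()

# Generation 0: a "program" whose weights live inside the code tree.
gen0 = ["define", "layer", ["weights", 0.5, -0.25], ["bias", 0.0]]

# Placeholder for self-improvement: nudge every weight by a fixed step.
gen1 = [gen0[0], gen0[1],
        ["weights"] + [w + 0.25 for w in gen0[2][1:]],
        gen0[3]]

# Writing the modified tree out as text is the whole "save" step.
path = os.path.join(tempfile.mkdtemp(), "gen1.lisp")
with open(path, "w") as f:
    f.write(to_sexpr(gen1))

with open(path) as f:
    reloaded = parse_sexpr(f.read())

print(to_sexpr(gen1))                 # (define layer (weights 0.75 0.0) (bias 0.0))
print(reloaded == gen1)               # True
```

In Python the printer and parser are extra machinery. In a Lisp the reader and printer already do this, which is why saving the modified code and saving the model would be the same operation.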

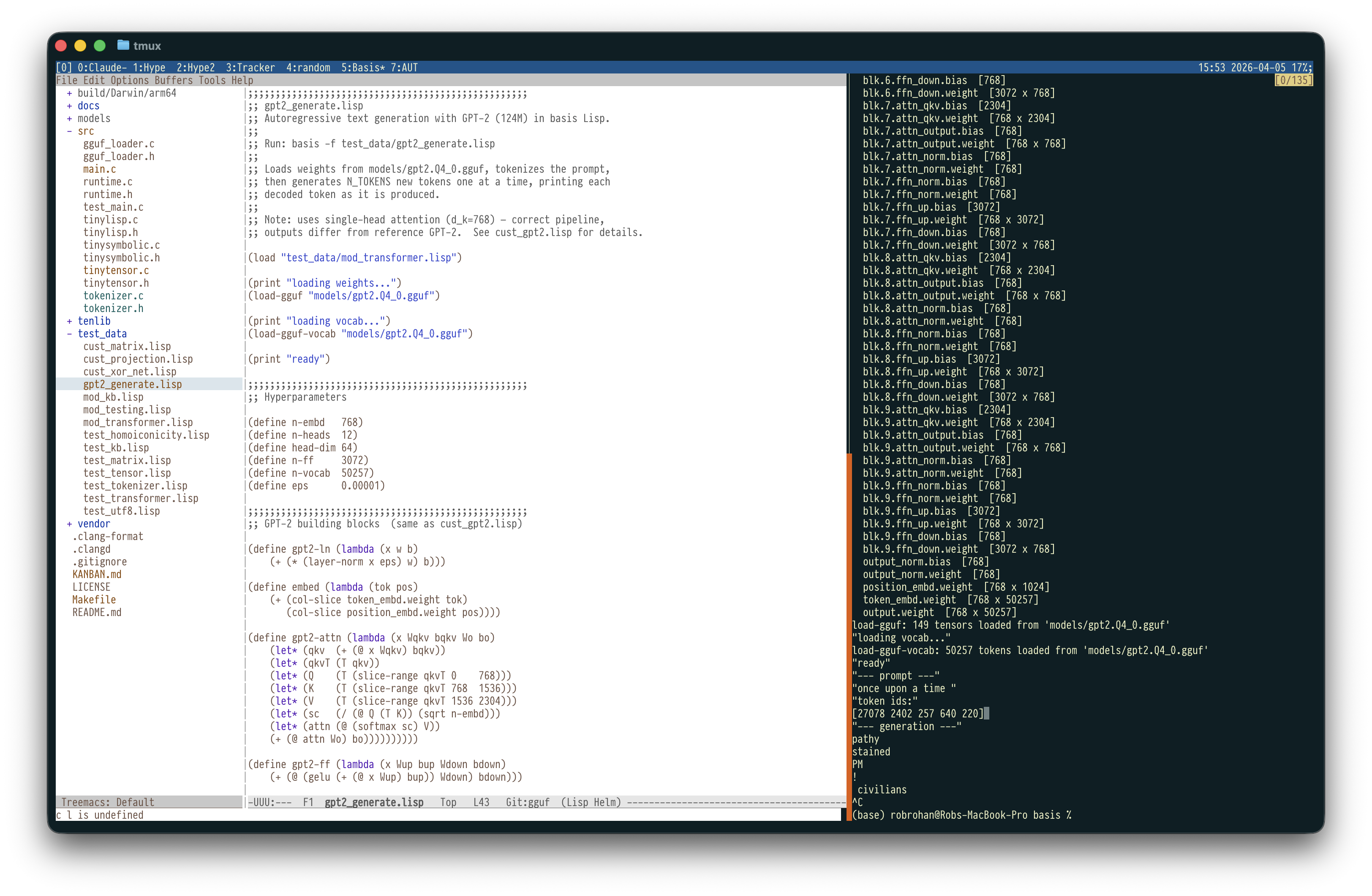

Basis

Long way around, the amazing thing about Claude Code is, I was able to, just about, make a prototype of this in about a week. It still has a ways to go for me to be able to wire all the experiments together, but check out the building blocks that I now have:

- It supports basic tensors, vectors, and matrices

- It can easily support OpenGL view / projection matrix code at run time

- You can build simple neural networks out of the box

- It can do transformers

- It can load GGUF models, and has enough support to implement and run them.

- It has basic knowledge base support

- It has a REPL

- It supports UTF-8 / Emoji variable names

While this isn’t something that would likely run in production (it’s single-threaded, has very simple garbage collection, uses non-GPU-based matrix multiplication, and has other limitations), it’s shockingly capable.

And while I did write a lot of the fundamental code myself, and used some 3rd party code as well, there is no way I would have been able to build this by myself in a week. It would have taken me a year at best to get this far.

Ignorance hides behind barriers of tradition

Something that will get lost in the coming years: the barriers to trying out ideas are going to get low. I don’t know if that is a good thing or a bad thing. The filter of effort usually means that you really have to believe in an idea to get it going. Now, at the drop of a hat, if you know what you are doing, you can churn out prototypes like nobody’s business.

For me, at this point it seems like a wonderful thing. I have learned so much “co-building” this. I have learned a lot about Lisp and how it works, corrected a few misunderstandings I had about transformers and the GGUF format, and picked up a bunch of small random things - like the debate around whether (rank [0]) is rank 1 or rank 0. Weird little side quests.

While it is totally possible to have Claude Code, ChatGPT, Mistral, etc. “do your homework” for you, if you use them instead to dig deeper on your edge of knowledge, you can learn so much.

Also, please don’t stop hand coding things.